I Built an AI Swing Analyzer – Here’s What I Learned

Over the past few weeks, I built and deployed a simple AI-powered swing tracker for athletes. You can try it here: https://huggingface.co/spaces/danieljordanviray/athlete-angle

What It Does

The tool takes in a video of your swing (golf, baseball, or any sport involving rotational movement) and analyzes your form. Using AI pose detection, it tracks your joints, calculates the angles of your shoulder line and hip line relative to the ground, and overlays that data onto the video. The goal is to give athletes a sense of how their form compares with an ideal “perfect swing.”

Why I Built This

I’ve been practicing golf lately, and it’s humbling. According to ChatGPT (and anyone who’s tried), golf is one of the hardest sports to master. The difference between a great shot and a terrible one can come down to a 1° difference in hip rotation or shoulder tilt. That level of precision intrigued me.

At the same time, I’ve been exploring computer vision and image recognition as I level up my AI engineering skills. This project felt like the perfect way to combine the two: use AI to analyze swings with the goal of helping athletes adjust and improve.

How It Works

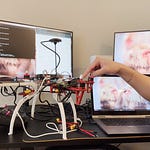

Under the hood, the app uses MediaPipe, Google’s open-source framework that includes a human pose estimation model, to track joints in the uploaded video. From there, I use some basic vector math to calculate joint angles, specifically how the hip line and shoulder line tilt relative to the ground during the swing.
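As a rough illustration of that vector math, here is a minimal sketch of measuring how far a line between two joints tilts from horizontal. It is not the app's actual code: the function name `tilt_deg` and its parameters are made up for this example. It assumes landmarks arrive as normalized (x, y) pairs with y growing downward, which is how MediaPipe Pose reports them, so the points are scaled to pixels before the angle is taken.

```python
import math


def tilt_deg(p_a, p_b, frame_w, frame_h):
    """Tilt of the line through p_a and p_b relative to horizontal, in degrees.

    p_a, p_b: normalized (x, y) pairs in [0, 1], with y growing downward.
    The result is folded into (-90, 90], so the order of the points does not
    matter. Positive means the line rises toward the right of the image.
    """
    # Scale to pixels first: normalized units are only square if the frame is.
    dx = (p_b[0] - p_a[0]) * frame_w
    # Image y grows downward, so negate it to get an "up is positive" axis.
    dy = -(p_b[1] - p_a[1]) * frame_h
    angle = math.degrees(math.atan2(dy, dx))
    # Fold the direction of a vector into the tilt of an undirected line.
    if angle > 90:
        angle -= 180
    elif angle <= -90:
        angle += 180
    return angle


# Hypothetical landmark positions for the two shoulders in a 1920x1080 frame.
shoulder_a, shoulder_b = (0.25, 0.5), (0.75, 0.25)
shoulder_tilt = tilt_deg(shoulder_a, shoulder_b, frame_w=1920, frame_h=1080)
```

The same function can be called with the two hip landmarks to get the hip line's tilt. Scaling by the frame size matters: on a non-square frame, an angle computed directly from normalized coordinates would be distorted.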

The processed video then plays back on the web with labeled angles and overlays, and users can download the analyzed result.

Known Limitations

Right now, the app only works reliably under specific conditions:

The video must be front-facing

The athlete must be a right-handed swinger

That’s because the entire angle calculation logic is based on this assumed setup. Supporting other cases, such as left-handed swings, side-angle footage, poor-quality videos, or different lighting environments, would require writing much more generalized, robust code. That means more time, more testing, and potentially more money.

And that’s the key point: to justify that level of investment, you’d need to truly believe in the product and the value it brings to users. Without that conviction, it’s hard to justify polishing it to a commercial-grade level. Especially for a solo builder.

What Broke (And How I Fixed It)

The most annoying problem I ran into? Video rotation. Phone videos often carry a rotation flag in their metadata, and for some reason some videos were automatically rotated when read in Python while others weren’t. This messed up both the angle calculations and the placement of labels: I was measuring angles relative to the ground (horizontal), so a misinterpreted rotation could throw everything off. I hacked around it for now, but it’s not elegant or foolproof.
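One way to reason about the rotation problem, sketched here under assumptions rather than taken from the app: if you know how far a stored frame is turned, you can map every landmark back into the upright frame before measuring anything against the ground. The helper names `to_upright` and `upright_size` are hypothetical, and so is the convention that `rotation_cw` means "clockwise degrees needed to make the frame upright". Tools disagree on the sign of the rotation tag, so that part needs checking against real footage.

```python
def to_upright(x, y, rotation_cw):
    """Map a normalized (x, y) point from the stored frame to the upright frame.

    rotation_cw: clockwise degrees (0, 90, 180, or 270) that the stored frame
    must be turned to look upright. This convention is an assumption of the
    sketch, not something read from a particular library.
    """
    rotation_cw %= 360
    if rotation_cw == 0:
        return x, y
    if rotation_cw == 90:
        # Top-left of the stored frame ends up at the top-right.
        return 1.0 - y, x
    if rotation_cw == 180:
        return 1.0 - x, 1.0 - y
    if rotation_cw == 270:
        # Top-left of the stored frame ends up at the bottom-left.
        return y, 1.0 - x
    raise ValueError(f"unsupported rotation: {rotation_cw}")


def upright_size(width, height, rotation_cw):
    """Frame size after the same rotation; 90 and 270 swap width and height."""
    return (height, width) if rotation_cw % 180 == 90 else (width, height)
```

The alternative is to rotate the frames themselves before running pose detection, which also keeps the overlay labels in the right place. Either way, the key is to apply the rotation exactly once, which is hard when the decoder sometimes applies it for you.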

There were also codec and playback quirks depending on the browser or platform, but I won’t get into those here.

Shipping to the Web

Deploying this app was part of the goal. I wanted to get practice pushing a project all the way to the finish line. Even if it’s not perfect, I wanted it live.

So I used Hugging Face Spaces and Streamlit to deploy it on the web. Most of the deployment code came from ChatGPT, but I stitched it together to make a usable experience: upload a video, analyze it, watch the output online, and download it.

There are still bugs—especially around video orientation—but it works. It’s public. And that’s progress.

Reflections on Shipping Product

Here’s what I took away from the experience:

Shipping is mostly bug fixing. The actual AI and math weren’t hard. The hard part was debugging inconsistent behavior and making sure everything worked smoothly for users.

I only want to ship things I’d use. I’m not motivated to polish and generalize code for a product I wouldn’t pay for myself. I don’t want to spend serious time on a toy app. There are plenty of existing swing analyzers out there, many far more sophisticated than mine, but I wouldn’t use them either, because they’re clunky or their value is unclear. Unless I were a professional athlete, I wouldn’t want to pay for one.

Precision matters. Sports motion is incredibly nuanced. For a tool like this to be truly useful, it needs to be very accurate and reliable. A rough estimate isn’t helpful—it’s just noise. I don’t want to build noisy tools. I want to build things that are actually useful.

General-purpose tools are expensive to build. Supporting more edge cases and making the tool truly general (left-handed swings, various camera angles, noisy footage) would take serious development effort. Which means, again, the product had better be worth it, for me and for users.
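To put a rough number on the precision point in the list above, here is a small back-of-the-envelope sketch. The 200-pixel shoulder span is an assumed value for illustration, not a measurement from the app, and `offset_px` is a hypothetical helper.

```python
import math


def offset_px(span_px, tilt_deg):
    """Vertical offset between the ends of a line with horizontal span span_px
    when that line is tilted tilt_deg degrees from horizontal."""
    return span_px * math.tan(math.radians(tilt_deg))


# Assumed: the shoulders are about 200 pixels apart in the frame.
one_degree = offset_px(200, 1.0)  # roughly 3.5 pixels
```

Under that assumption, a 1° change in shoulder tilt moves one shoulder only about 3.5 pixels relative to the other. If the detected joints wobble by a few pixels from frame to frame, that wobble is already as large as the difference the tool is supposed to measure.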

Final Thoughts

Even if it’s not a breakthrough product, this was a valuable learning experience. I practiced full-stack AI development: building, debugging, deploying, and thinking about what makes a product worth polishing. The next step? Building something with real impact.

Until then, if you’re curious, give the swing tracker a try—and let me know what you think.

→ Try it here: Athlete Angle – AI Swing Analyzer (https://huggingface.co/spaces/danieljordanviray/athlete-angle)